Why Does Your Computer Only Know Two Numbers?

Right now, as you read this sentence, billions of transistors in your CPU are flipping between two states — and from those humble coin-flips springs every photo, every song, every video game, every spreadsheet that has ever run on a digital computer. All of it. Just ones and zeros.

That sounds like either a miracle or a magic trick. It is actually neither — it is a deeply deliberate engineering decision, rooted in physics, mathematics, and one of the most celebrated intellectual insights of the twentieth century. Let us find out exactly why.

The Physical Reality: Voltage Is Messy

A transistor is, at its core, a voltage-controlled switch. You apply a voltage to the gate terminal, and current either flows or it does not. Strictly speaking, a transistor is an analog device that can sit anywhere between those extremes, but digital circuits deliberately drive it hard to one end or the other: on or off, conducting or not conducting.

Now imagine you want to store a decimal digit — 0 through 9 — in a single electronic element. You would need to distinguish ten different voltage levels: perhaps 0 V for 0, 0.5 V for 1, 1.0 V for 2, … 4.5 V for 9. That would work perfectly in a noise-free, ideal world.

The real world is not ideal.

The Noise Margin Argument

Every electronic circuit operates in an environment full of electromagnetic interference — from power supply ripple, nearby switching circuits, thermal noise in resistors, and capacitive coupling between wires. This noise adds and subtracts small voltages from your signal, constantly.

Consider the analogy of trying to measure a person’s height by looking at their shadow on a windy day. If you are trying to classify them into ten height buckets (160–161 cm, 161–162 cm, …), a small gust of wind makes the shadow dance and your measurement jumps between buckets. But if you only need to know “tall or short” — two buckets — a gust of wind has to be enormous to push you into the wrong category.

That is the noise margin argument in one paragraph.

With ten voltage levels spaced 0.5 V apart in a 5 V system, a noise spike of only 250 mV, half the spacing, is enough to push a reading toward the neighboring level and corrupt a digit. With two voltage levels, LOW (near 0 V) and HIGH (near the supply voltage), you have an entire volt or more of margin before a noise spike causes an error. Even better, digital logic circuits actively restore the signal: a gate that receives a “kind of HIGH but degraded” input will output a crisp, clean HIGH. The signal is regenerated at every stage.
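To make the margin argument concrete, here is a minimal Python sketch. It assumes an idealized receiver that simply picks the nearest nominal level; the names `decode`, `survives`, and `restore`, the 0.3 V spike, and the exact 0 V / 5 V binary levels are illustrative assumptions, not a model of any real logic family.

```python
# Nominal voltage levels for the two encodings discussed above (idealized).
DECIMAL_LEVELS = [0.5 * d for d in range(10)]  # 0.0 V ... 4.5 V, one per digit
BINARY_LEVELS = [0.0, 5.0]                     # LOW and HIGH in a 5 V system


def decode(voltage, levels):
    """Return the index of the nominal level closest to the measured voltage."""
    return min(range(len(levels)), key=lambda i: abs(levels[i] - voltage))


def survives(symbol, noise, levels):
    """True if `symbol` is still decoded correctly after adding `noise` volts."""
    return decode(levels[symbol] + noise, levels) == symbol


def restore(voltage, levels=BINARY_LEVELS):
    """Model signal regeneration: snap a degraded voltage back to its clean level."""
    return levels[decode(voltage, levels)]


noise = 0.3  # volts: an assumed spike, slightly more than half the 0.5 V spacing

print(survives(7, noise, DECIMAL_LEVELS))   # False: 3.8 V is read as digit 8
print(survives(1, -noise, BINARY_LEVELS))   # True: 4.7 V is still read as HIGH
print(restore(4.7))                         # 5.0: the degraded HIGH is regenerated
```

The same 0.3 V disturbance that flips a decimal digit leaves the binary symbol untouched, and `restore` shows why errors do not accumulate from one gate to the next.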

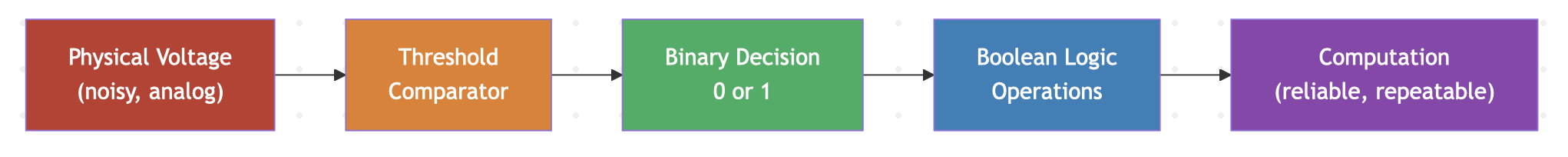

This pipeline — noisy physical voltage, threshold decision, binary abstraction, logic, computation — is the backbone of every digital system ever built.

A Brief History: Three Giants

Binary computing did not appear overnight. The story unfolded over more than two centuries and took three extraordinary minds.

Gottfried Wilhelm Leibniz, 1703

Leibniz, co-inventor of calculus and polymath extraordinaire, published his Explication de l’Arithmétique Binaire in 1703. He showed that all numbers could be represented using only 0 and 1, and that arithmetic could be performed in this system. He was enchanted by the philosophical implications, creation from nothing (0) and unity (1), but the practical machinery to use his insight would not arrive for more than two centuries.

George Boole, 1847

George Boole, a largely self-taught mathematician, published The Mathematical Analysis of Logic in 1847, followed by his landmark An Investigation of the Laws of Thought in 1854. Boole showed that logical reasoning — AND, OR, NOT — could be expressed as algebra operating on variables that take only two values: true and false, or equivalently 1 and 0.

Boolean algebra gave us a rigorous mathematical framework for reasoning about two-valued systems. But even Boole did not connect this to physical machinery.

Claude Shannon, 1937

Here is where the lightning bolt strikes.

💡 Insight: Claude Shannon’s 1937 MIT master’s thesis, “A Symbolic Analysis of Relay and Switching Circuits,” is frequently called the most important master’s thesis of the 20th century. Shannon proved that the algebra Boole invented for abstract logic could describe the behavior of physical electrical relay networks — directly and completely. This meant that you could design a circuit using symbolic math, verify it with algebra, and build it with switches. For the first time, logic and electronics were unified into a single engineering discipline. Every digital chip ever made rests on this foundation.

Shannon was 21 years old.

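As an undergraduate he had taken a philosophy course that covered Boolean algebra, and he was now working with relay circuits, both on the differential analyzer at MIT and during a summer at Bell Telephone Laboratories. The connection struck him: relay contacts open and close, and contacts wired in series behave like AND while contacts wired in parallel behave like OR. The math already existed. Someone just needed to see that it described the hardware.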

That someone was Shannon, and that moment arguably launched the digital age.
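The correspondence between switches and Boolean algebra can be sketched in a few lines of Python. This is only an illustration in modern notation, not Shannon's own formalism; the function names `series`, `parallel`, and `normally_closed` are hypothetical, and `True` stands for a contact that conducts.

```python
from itertools import product


def series(a, b):
    """Two contacts in series: current flows only if both conduct (AND)."""
    return a and b


def parallel(a, b):
    """Two contacts in parallel: current flows if either conducts (OR)."""
    return a or b


def normally_closed(a):
    """A normally closed contact conducts when its relay is not energized (NOT)."""
    return not a


def de_morgan_holds():
    """Check NOT(a AND b) == (NOT a) OR (NOT b) for every switch combination."""
    return all(
        normally_closed(series(a, b))
        == parallel(normally_closed(a), normally_closed(b))
        for a, b in product([False, True], repeat=2)
    )


print(de_morgan_holds())  # True
```

Because the circuit and the algebra agree on every input, an identity proved on paper is also a statement about which two relay networks are interchangeable.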

Bits and Bytes: Building Blocks of Everything

Once you commit to binary, you need vocabulary.

A bit (binary digit) is the smallest unit of information — a single choice between two states. Think of it as a single coin flip: heads or tails, 1 or 0. On its own, a bit can represent exactly two things.

A byte is 8 bits. Think of it as 8 coin flips in a row. How many distinct outcomes are there? $2^8 = 256$. A byte can encode 256 different values, which is enough for all the printable characters on a keyboard with room to spare. ASCII itself needs only 7 bits (128 codes); the remaining values were later put to use by extended character sets.

| Unit | Bits | Combinations |
|---|---|---|
| Bit | 1 | $2^1 = 2$ |
| Nibble | 4 | $2^4 = 16$ |
| Byte | 8 | $2^8 = 256$ |
| Word (16-bit) | 16 | $2^{16} = 65{,}536$ |
| Double Word | 32 | $2^{32} \approx 4.3 \times 10^9$ |
| Quad Word | 64 | $2^{64} \approx 1.8 \times 10^{19}$ |

That last number, $1.8 \times 10^{19}$, is why a 64-bit address space is far larger than any memory you will realistically install: $2^{64}$ bytes is 16 exbibytes. Not every quantity in a computer is 64 bits wide, though. Network addresses, for example, are 32 bits in IPv4 and 128 bits in IPv6.
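The values in the table can be checked with a short Python sketch. The helper name `combinations` and the `UNITS` list are illustrative, and the unit names simply follow the table.

```python
def combinations(bits):
    """Number of distinct values representable with the given number of bits."""
    return 2 ** bits


UNITS = [
    ("Bit", 1),
    ("Nibble", 4),
    ("Byte", 8),
    ("Word", 16),
    ("Double Word", 32),
    ("Quad Word", 64),
]

for name, bits in UNITS:
    print(f"{name:<12} {bits:>2} bits -> {combinations(bits):,} values")

# A byte written out as its 8 individual bits: the ASCII code for "A" is 65.
print(format(ord("A"), "08b"))  # 01000001
```

Each added bit doubles the count, which is why the numbers climb so quickly between rows.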

Why Not Ternary? Or Decimal?

This question comes up constantly, and it deserves a direct answer.

Ternary (base-3) computers have been built — the Soviet Setun computer in 1958 used balanced ternary arithmetic and was genuinely elegant. The problem is manufacturing: building a reliable three-state transistor is significantly harder than a two-state one. The noise margins shrink. The logic gate design becomes more complex. And the gap in reliability means that the theoretical information-density advantage of ternary never materialized in practice.

Decimal computers existed in the early days, and BCD (Binary Coded Decimal) is still used in financial applications. But a decimal transistor is a fiction; you always end up encoding each decimal digit in binary anyway.

🔧 Real World: BCD (Binary Coded Decimal) encoding is still used in real-time clocks (RTC chips), financial calculations where exact decimal representation matters, and seven-segment display drivers. Your digital alarm clock almost certainly stores the time in BCD internally. But even these systems run on binary transistors — they just group the bits to represent decimal digits.
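As a rough sketch of what "grouping the bits" means, the Python below packs each decimal digit into its own 4-bit nibble. The function names `to_bcd` and `from_bcd` are hypothetical, and real RTC chips define their own register layouts, so treat this as an illustration of packed BCD in general.

```python
def to_bcd(n):
    """Pack a non-negative integer into BCD: one 4-bit nibble per decimal digit."""
    if n < 0:
        raise ValueError("BCD sketch only handles non-negative integers")
    result = 0
    shift = 0
    while True:
        n, digit = divmod(n, 10)    # peel off the lowest decimal digit
        result |= digit << shift    # place it in the next free nibble
        shift += 4
        if n == 0:
            return result


def from_bcd(bcd):
    """Unpack a BCD value back into an ordinary integer."""
    value = 0
    place = 1
    while bcd:
        digit = bcd & 0xF           # lowest nibble holds the lowest digit
        if digit > 9:
            raise ValueError("invalid BCD nibble")
        value += digit * place
        place *= 10
        bcd >>= 4
    return value


minutes = 59
packed = to_bcd(minutes)
print(format(packed, "08b"))   # 01011001: nibbles 0101 (5) and 1001 (9)
print(format(minutes, "08b"))  # 00111011: the same number in plain binary
print(from_bcd(packed))        # 59
```

Notice that the BCD form reads as the hex value `0x59`, so each hex digit matches a decimal digit. That property is what makes BCD convenient for driving displays.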

Binary won because it maps perfectly onto the physics of transistors. Two states. High. Low. On. Off. The hardware and the math align beautifully.

The Remarkable Consequence: Universality

Here is the thought that should stop you in your tracks.

Every song you have ever heard digitally was once sampled into a sequence of numbers. At CD quality that means 44,100 samples per second per channel, each a 16-bit integer. Those integers are stored as bits. The bits are read, processed by arithmetic circuits, and converted back to voltages that push a speaker cone.

Every photograph you have taken on a smartphone is a grid of pixels, each with three 8-bit numbers (red, green, blue intensity). Stored. Transmitted. Displayed. All as bits.

Every video game renders a scene by performing millions of floating-point multiplications per frame — each multiplication is binary arithmetic inside your GPU.

The astonishing power of digital systems is not just that they use binary. It is that any information can be encoded as numbers, and numbers can be encoded as bits. Leibniz saw the elegance. Boole built the algebra. Shannon bridged the gap to physics. And now you hold the result in your hand.
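The examples above (text, a pixel, an audio sample) can each be reduced to bits in a few lines of Python. The specific values chosen here are arbitrary illustrations, and the little-endian layout used for the audio sample is an assumption rather than a property of any particular file format.

```python
import struct

# Text: each ASCII character becomes one byte.
text_bits = " ".join(format(b, "08b") for b in "Hi".encode("ascii"))
print(text_bits)    # 01001000 01101001

# Image: one pixel as three 8-bit channel intensities (red, green, blue).
pixel = (255, 128, 0)
pixel_bits = " ".join(format(channel, "08b") for channel in pixel)
print(pixel_bits)   # 11111111 10000000 00000000

# Audio: one signed 16-bit sample, stored in two's complement.
sample = -12345
sample_bytes = struct.pack("<h", sample)        # "<h" = little-endian int16
sample_bits = format(sample & 0xFFFF, "016b")   # view the same 16 bits as a pattern
print(sample_bits)  # 1100111111000111

# Decoding recovers the original number exactly.
print(struct.unpack("<h", sample_bytes)[0])     # -12345
```

In all three cases the pattern is the same: pick a numeric encoding for the information, then write the numbers in binary.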

Summary

- Binary (two states) maps naturally onto transistor physics — HIGH and LOW voltages with large noise margins

- Ten-level (decimal) encoding is fragile; two-level encoding is robust and self-restoring

- Leibniz described binary arithmetic (1703), Boole formalized binary logic (1847), Shannon connected binary logic to switching circuits (1937)

- A bit is one binary choice; a byte is 8 bits encoding 256 values

- Every form of digital information — text, audio, video, computation — is ultimately sequences of bits

⚠️ Common Mistake: Students sometimes think “binary” means “simple” or “limited.” In reality, binary is a representation choice. A 64-bit number can take over $10^{19}$ different values — that is not a limitation, that is an engineering triumph achieved with cheap, fast, reliable transistors.

What’s Next

Now that you know why computers use binary, you need to become fluent in it — reading it, writing it, and converting between binary and its close cousins hexadecimal and octal. In the next post, we will learn to count in multiple bases and develop the intuition for positional number systems that every digital designer carries in their head automatically.